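1. Basic environment configuration (on all hosts)

The environment for this walkthrough: Kubernetes 1.30.0 deployed with one master and two workers. Each node has 10 GB of memory and a 100 GB disk.

## OS version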

root@k8s-master01:~# hostnamectl

Static hostname: k8s-master01

Icon name: computer-vm

Chassis: vm

Machine ID: 0611b7b27b2f478b9c47fc8d272b9117

Boot ID: 34721bf2fab246b990ad21a7cd8355ee

Virtualization: kvm

Operating System: Ubuntu 22.04.4 LTS

Kernel: Linux 5.15.0-116-generic

Architecture: x86-64

Hardware Vendor: QEMU

Hardware Model: Standard PC (i440FX + PIIX, 1996)

## System memory

root@k8s-master01:~# free -h

total used free shared buff/cache available

Mem: 9.7Gi 1.0Gi 5.3Gi 2.0Mi 3.3Gi 8.4Gi

Swap: 0B 0B 0B

## System disks

root@k8s-master01:~# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

loop0 7:0 0 63.9M 1 loop /snap/core20/2105

loop1 7:1 0 64M 1 loop /snap/core20/2379

loop2 7:2 0 87M 1 loop /snap/lxd/27037

loop3 7:3 0 87M 1 loop /snap/lxd/29351

loop4 7:4 0 40.4M 1 loop /snap/snapd/20671

loop5 7:5 0 38.8M 1 loop /snap/snapd/21759

sda 8:0 0 100G 0 disk

├─sda1 8:1 0 1M 0 part

├─sda2 8:2 0 2G 0 part /boot

└─sda3 8:3 0 98G 0 part

  └─ubuntu--vg-lv--0 253:0 0 98G 0 lvm /

Configure hostnames and name resolution

# On k8s-master01, k8s-worker01, and k8s-worker02 (pass each host's own name to set-hostname)

sudo hostnamectl set-hostname k8s-worker01

cat <<EOF | sudo tee -a /etc/hosts

192.168.11.26 k8s-master01

192.168.11.27 k8s-worker01

192.168.11.28 k8s-worker02

EOF

Configure time synchronization

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo timedatectl set-timezone Asia/Shanghai

sudo apt install ntpdate -y

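sudo ntpdate ntp.ubuntu.com

Disable the swap partition

# On k8s-master01, k8s-worker01, and k8s-worker02

# Turn swap off now, then comment out the swap entry so it stays off after a reboot

sudo swapoff -a

sudo sed -ri 's/.*swap.*/#&/' /etc/fstab

Disable the firewall

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo systemctl disable --now ufw

Configure ulimit

# On k8s-master01, k8s-worker01, and k8s-worker02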

ulimit -SHn 65535

cat >> /etc/security/limits.conf <<EOF

* soft nofile 131072

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

Configure ipvsadm

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo apt install ipvsadm ipset sysstat conntrack -y

cat >> /etc/modules-load.d/ipvs.conf <<EOF

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

systemctl restart systemd-modules-load.service

Adjust kernel parameters

# On k8s-master01, k8s-worker01, and k8s-worker02

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

net.ipv6.conf.all.disable_ipv6 = 0

net.ipv6.conf.default.disable_ipv6 = 0

net.ipv6.conf.lo.disable_ipv6 = 0

net.ipv6.conf.all.forwarding = 1

EOF
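Before loading the file, it can help to check whether any key is assigned more than once, since a later assignment silently overrides an earlier one. The helper below is a minimal sketch; the name `duplicate_sysctl_keys` is an assumption made for illustration and is not part of any tool.

```shell
# duplicate_sysctl_keys FILE
# Prints each key that is assigned more than once in a sysctl-style file.
# (Hypothetical helper; it only reads the file and changes nothing.)
duplicate_sysctl_keys() {
  awk -F= '
    /^[ \t]*[#;]/ || /^[ \t]*$/ { next }   # skip comments and blank lines
    {
      key = $1
      gsub(/[ \t]/, "", key)               # "a.b = 1" and "a.b=1" name the same key
      if (++seen[key] == 2) print key      # report each duplicated key once
    }
  ' "$1"
}

# Usage:
#   duplicate_sysctl_keys /etc/sysctl.d/k8s.conf
```

An empty result means every key appears only once.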

sysctl --system

Configure passwordless SSH (on master01)

# On k8s-master01

sudo apt install -y sshpass

sudo ssh-keygen -f /root/.ssh/id_rsa -P ''

export IP="192.168.11.26 192.168.11.27 192.168.11.28"

export SSHPASS=0000

for HOST in $IP;do

sshpass -e ssh-copy-id -o StrictHostKeyChecking=no $HOST

done

2. Install the container runtime (on all hosts)

Download containerd on master01

# On k8s-master01

sudo wget https://github.com/containerd/containerd/releases/download/v1.7.15/cri-containerd-1.7.15-linux-amd64.tar.gz

sudo scp cri-containerd-1.7.15-linux-amd64.tar.gz root@k8s-worker01:/root/.

sudo scp cri-containerd-1.7.15-linux-amd64.tar.gz root@k8s-worker02:/root/.

Extract the containerd package

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo tar -xvf cri-containerd-1.7.15-linux-amd64.tar.gz -C /

Create the directory and generate the default configuration file

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo mkdir -p /etc/containerd

containerd config default | sudo tee /etc/containerd/config.toml > /dev/null

Edit the containerd configuration file

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo sed -i "s#SystemdCgroup\ \=\ false#SystemdCgroup\ \=\ true#g" /etc/containerd/config.toml

sudo cat /etc/containerd/config.toml | grep SystemdCgroup

sudo sed -i "s#registry.k8s.io#k8s.dockerproxy.com#g" /etc/containerd/config.toml

sudo cat /etc/containerd/config.toml | grep sandbox_image

sudo sed -i "s#config_path\ \=\ \"\"#config_path\ \=\ \"/etc/containerd/certs.d\"#g" /etc/containerd/config.toml

sudo cat /etc/containerd/config.toml | grep certs.d

sudo sed -i 's|k8s.dockerproxy.com/pause:3.8|registry.aliyuncs.com/google_containers/pause:3.9|g' /etc/containerd/config.toml

sudo cat /etc/containerd/config.toml | grep registry.aliyuncs.com/google_containers/pause:3.9

Configure a registry mirror

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo mkdir -pv /etc/containerd/certs.d/docker.io

cat << EOF | sudo tee /etc/containerd/certs.d/docker.io/hosts.toml

server = "https://docker.io"

[host."https://dockerproxy.net/"]

capabilities = ["pull", "resolve"]

EOF

Enable at boot and start the service

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo systemctl daemon-reload

sudo systemctl enable --now containerd.service

sudo systemctl status containerd.service

3. Set up the cluster deployment tools (on all hosts)

Add the Kubernetes package repository and its signing key

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo mkdir -p /etc/apt/keyrings/

curl -fsSL https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/deb/Release.key | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/deb/ /" | sudo tee /etc/apt/sources.list.d/kubernetes.list

Update the package index

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo apt update -y

List the available versions

# On k8s-master01

sudo apt-cache policy kubeadm

Install the pinned version

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo apt-get install -y kubelet=1.30.0-1.1 kubeadm=1.30.0-1.1 kubectl=1.30.0-1.1

Configure kubelet and enable it at boot

# On Ubuntu the kubelet environment file is /etc/default/kubelet (/etc/sysconfig/kubelet is the RPM-based path)

sudo sed -i "s|KUBELET_EXTRA_ARGS=|KUBELET_EXTRA_ARGS=\"--cgroup-driver=systemd\"|g" /etc/default/kubelet

sudo systemctl daemon-reload

sudo systemctl enable --now kubelet

sudo systemctl status kubelet

Reboot all hosts

# On k8s-master01, k8s-worker01, and k8s-worker02

sudo reboot

4. Initialize the cluster (on master01)

Check the version

# On k8s-master01

sudo kubeadm version

Generate the configuration file

# On k8s-master01

sudo kubeadm config print init-defaults > kubeadm-config.yaml

cat <<EOF> kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta3

bootstrapTokens:

- groups:

- system:bootstrappers:kubeadm:default-node-token

token: abcdef.0123456789abcdef

ttl: 24h0m0s

usages:

- signing

- authentication

kind: InitConfiguration

localAPIEndpoint:

advertiseAddress: 192.168.11.26 # master节点ip

bindPort: 6443

nodeRegistration:

criSocket: unix:///var/run/containerd/containerd.sock

imagePullPolicy: IfNotPresent

name: k8s-master01 # master节点主机名

taints: null

---

apiServer:

timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta3

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns: {}

etcd:

local:

dataDir: /var/lib/etcd

imageRepository: registry.aliyuncs.com/google_containers # 镜像源

kind: ClusterConfiguration

kubernetesVersion: 1.30.0

networking:

dnsDomain: cluster.local

serviceSubnet: 10.96.0.0/12

podSubnet: 10.244.0.0/16 # pod子网

scheduler: {}

---

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

cgroupDriver: systemd

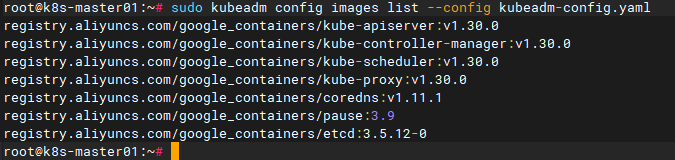

EOF查看镜像源

# k8s-master01上

sudo kubeadm config images list --config kubeadm-config.yaml

Pull the images

# On k8s-master01

sudo kubeadm config images pull --config kubeadm-config.yaml

List the local images

# On k8s-master01

sudo crictl images

Initialize the cluster

# On k8s-master01

sudo kubeadm init --config kubeadm-config.yaml

The output below is what a successful run looks like:

# On k8s-master01

root@k8s-master01:~# sudo kubeadm init --config kubeadm-config.yaml

[init] Using Kubernetes version: v1.30.0

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

W0920 11:30:14.208817 4170 checks.go:844] detected that the sandbox image "k8s.dockerproxy.com/pause:3.8" of the container runtime is inconsistent with that used by kubeadm.It is recommended to use "registry.aliyuncs.com/google_containers/pause:3.9" as the CRI sandbox image.

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.11.26]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.11.26 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.11.26 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "super-admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests"

[kubelet-check] Waiting for a healthy kubelet. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 501.607143ms

[api-check] Waiting for a healthy API server. This can take up to 4m0s

[api-check] The API server is healthy after 29.501582035s

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master01 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master01 as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: abcdef.0123456789abcdef

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.11.26:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:625b0bc28ab07abf3f9491cdacc6baeea636a070427abeb20d7f6c453a07b7b3

root@k8s-master01:~# sudo mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Run the commands from the output

# On k8s-master01

sudo mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config在worker节点中加入集群,看集群的反馈信息,类似信息如下所示,复制命令,在worker节点执行:

# k8s-worker01、k8s-worker02上

sudo kubeadm join 192.168.11.26:6443 --token abcdef.0123456789abcdef \

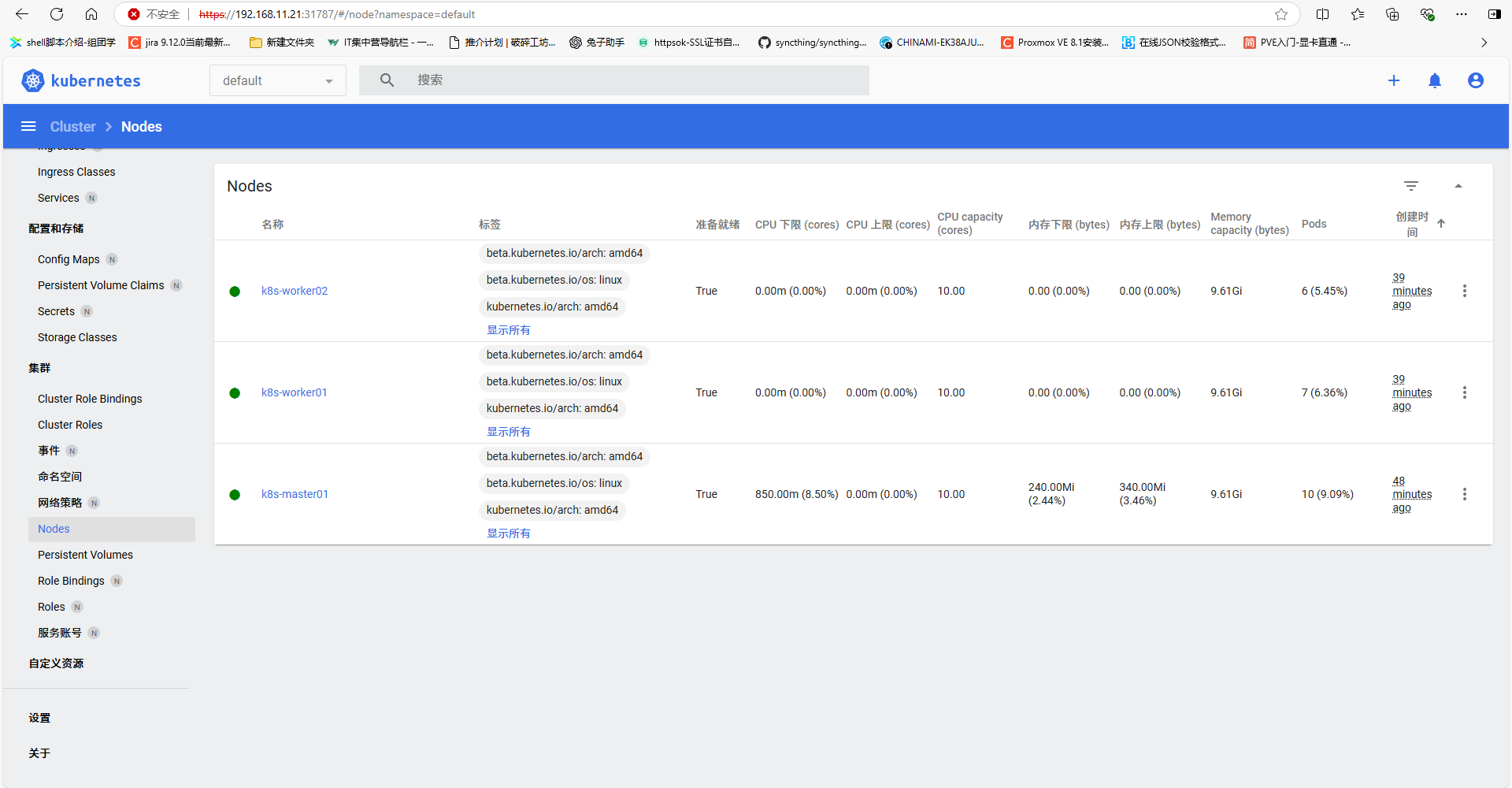

--discovery-token-ca-cert-hash sha256:625b0bc28ab07abf3f9491cdacc6baeea636a070427abeb20d7f6c453a07b7b3检查工作节点是否加入

# k8s-master01上

sudo kubectl get nodes

Check the Pod status. The nodes report NotReady because no network plugin is installed yet, so the next step is to install one.
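To see at a glance which nodes are still waiting, the STATUS column of `kubectl get nodes` can be filtered. This is a small sketch; the function name `not_ready_nodes` is an assumption for illustration, and it expects the default table output on standard input.

```shell
# not_ready_nodes
# Reads `kubectl get nodes` table output on stdin and prints the names of
# nodes whose STATUS is not exactly "Ready" (so "Ready,SchedulingDisabled"
# is also listed). Hypothetical helper name.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'   # NR > 1 skips the header row
}

# Usage on the master node:
#   kubectl get nodes | not_ready_nodes
```

Once the network plugin is running, the command should print nothing.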

# On k8s-master01

sudo kubectl get pods -n kube-system

5. Install the Calico network plugin (on master01)

Download the Calico YAML manifests

# On k8s-master01

sudo wget https://raw.githubusercontent.com/projectcalico/calico/v3.28.2/manifests/tigera-operator.yaml

sudo wget https://raw.githubusercontent.com/projectcalico/calico/v3.28.2/manifests/custom-resources.yaml

Edit custom-resources.yaml so that its Pod CIDR matches the podSubnet configured for the cluster
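Because the same CIDR has to appear in both files, it can be worth comparing them instead of trusting a hard-coded substitution. The sketch below assumes both files sit in the current directory and that each key appears on a line of its own; `yaml_scalar` is a hypothetical text-matching helper, not a real YAML parser.

```shell
# yaml_scalar KEY FILE
# Prints the value of the first "KEY: value" line, ignoring indentation,
# a leading list dash, and any trailing "# comment".
yaml_scalar() {
  awk -v key="$1" '
    {
      line = $0
      sub(/^[ \t-]*/, "", line)               # drop indentation and list dashes
      if (index(line, key ":") == 1) {
        val = substr(line, length(key) + 2)   # text after "KEY:"
        sub(/[ \t]*#.*$/, "", val)            # strip a trailing comment
        gsub(/^[ \t]+|[ \t]+$/, "", val)      # trim surrounding whitespace
        print val
        exit
      }
    }
  ' "$2"
}

# Usage:
#   pod_cidr=$(yaml_scalar podSubnet kubeadm-config.yaml)
#   calico_cidr=$(yaml_scalar cidr custom-resources.yaml)
#   [ "$pod_cidr" = "$calico_cidr" ] || echo "mismatch: $pod_cidr vs $calico_cidr"
```

Running the comparison after the edit should print nothing when the two subnets agree.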

# On k8s-master01

sudo sed -i "s|192.168.0.0/16|10.244.0.0/16|g" custom-resources.yaml

Install Calico

# On k8s-master01

sudo kubectl create -f tigera-operator.yaml

sudo kubectl create -f custom-resources.yaml

Check that the Calico namespaces exist

# On k8s-master01

sudo kubectl get ns

## Output

root@k8s-master01:~# kubectl get ns

NAME STATUS AGE

calico-apiserver Active 153m

calico-system Active 157m

default Active 168m

kube-node-lease Active 168m

kube-public Active 168m

kube-system Active 168m

tigera-operator Active 157m

Check that the Calico Pods are running. Initialization may take 10 to 30 minutes.
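Instead of re-running the check by hand during that wait, a small polling loop can be used. The helper below is a sketch for bash; `wait_until` and its argument order are assumptions made for illustration.

```shell
# wait_until TIMEOUT_SECONDS INTERVAL_SECONDS COMMAND [ARGS...]
# Re-runs COMMAND until it succeeds or TIMEOUT_SECONDS have elapsed.
# Returns 0 on success and 1 on timeout. Uses bash's SECONDS counter.
wait_until() {
  local timeout=$1 interval=$2
  shift 2
  local deadline=$(( SECONDS + timeout ))
  until "$@" >/dev/null 2>&1; do
    if (( SECONDS >= deadline )); then
      return 1
    fi
    sleep "$interval"
  done
}

# Usage (assumes kubectl is already configured on the master node):
#   wait_until 1800 15 kubectl wait --for=condition=Ready pods --all \
#     -n calico-system --timeout=10s
```

The outer loop also covers the early phase in which the namespace has no Pods yet and `kubectl wait` exits with an error.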

# On k8s-master01

sudo kubectl get pods -n calico-system

## Output

root@k8s-master01:~# kubectl get pods -n calico-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-6c7fc8f98d-qwhxn 1/1 Running 0 156m

calico-node-5q7k7 1/1 Running 0 156m

calico-node-jcpkl 1/1 Running 0 156m

calico-node-pcrmc 1/1 Running 0 156m

calico-typha-6bbf85bcdf-x42tf 1/1 Running 0 156m

calico-typha-6bbf85bcdf-xrcnq 1/1 Running 0 156m

csi-node-driver-m7tns 2/2 Running 0 156m

csi-node-driver-nx4rq 2/2 Running 0 156m

csi-node-driver-tgl44 2/2 Running 0 156m

6. Install the dashboard

# On k8s-master01

## Download the YAML file

sudo wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

## Apply the YAML file

sudo kubectl apply -f recommended.yaml

## Check how the dashboard is exposed; the default is ClusterIP, which is not reachable from outside the cluster

root@k8s-master01:~# kubectl get pod,svc -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

pod/dashboard-metrics-scraper-795895d745-zjkpj 1/1 Running 0 68s

pod/kubernetes-dashboard-56cf4b97c5-qtw8r 1/1 Running 0 68s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/dashboard-metrics-scraper ClusterIP 10.102.3.11 <none> 8000/TCP 68s

service/kubernetes-dashboard ClusterIP 10.97.11.88 <none> 443/TCP 68s

## 修改暴露方式为nodeport,方便集群外访问

### 修改方式一

sudo kubectl patch svc kubernetes-dashboard --type='json' -p '[{"op":"replace","path":"/spec/type","value":"NodePort"}]' -n kubernetes-dashboard

### 修改方式二

sudo kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

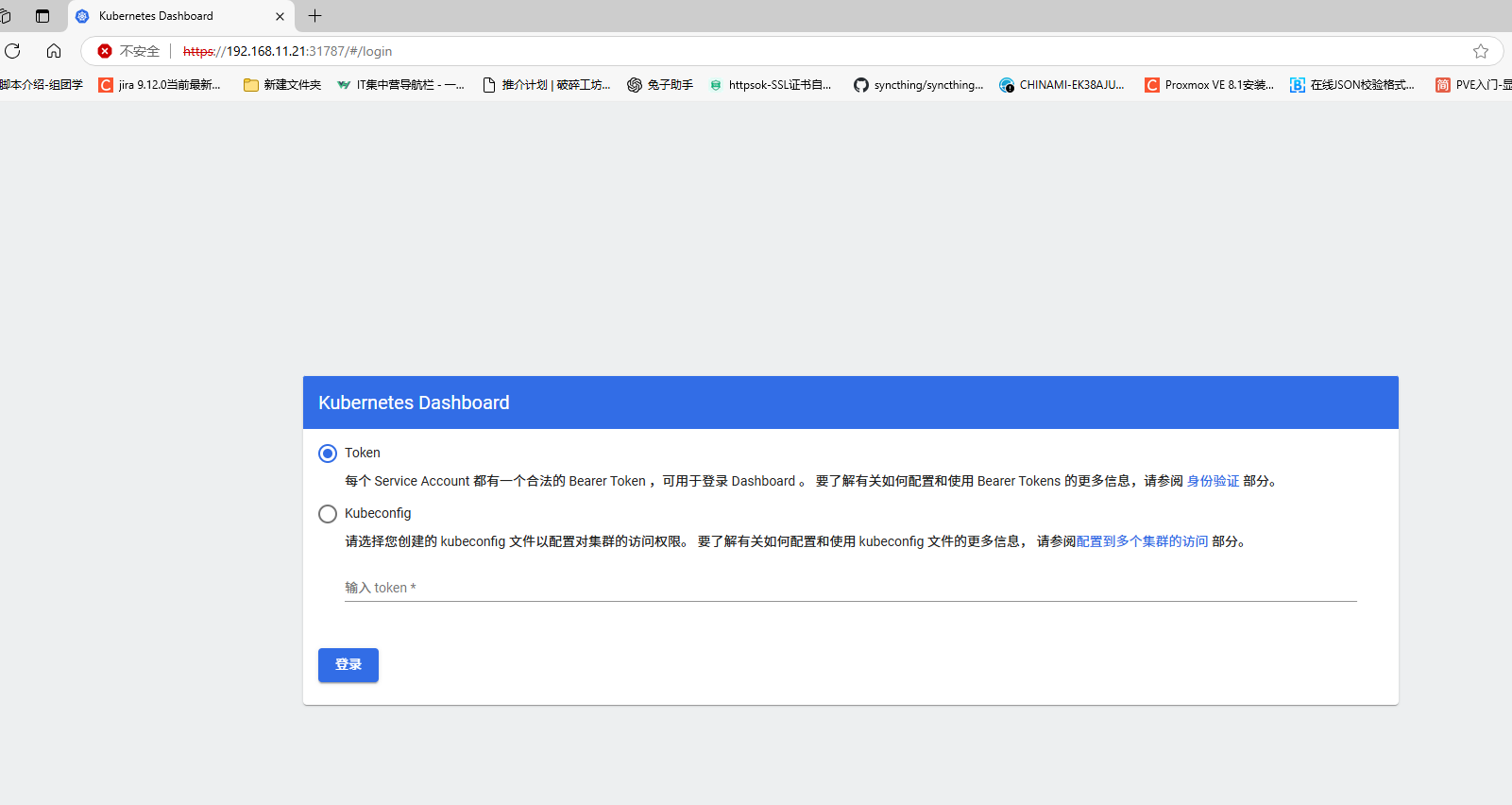

## 登陆GUI有两种方式token和kubeconfig,token比较简单,直接用token。创建ServiceAccount,并创建一个临时的token来作为登录GUI的Credential

sudo cat >k8s-dashboard.yaml<<EOF

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard

EOF

### Apply the ServiceAccount

sudo kubectl create -f k8s-dashboard.yaml

### Generate a temporary token and copy it

sudo kubectl -n kubernetes-dashboard create token admin-user

### Check the port the dashboard is exposed on; here it is 31787

root@k8s-master01:~# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 10.102.3.11 <none> 8000/TCP 8m32s

kubernetes-dashboard NodePort 10.97.11.88 <none> 443:31787/TCP 8m32s

Open the dashboard in a browser using any node's IP address, for example https://192.168.11.26:31787
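The NodePort is assigned by the cluster, so the number will differ between installations. A sketch for pulling it out of a PORT(S) value; `node_port_of` is a hypothetical helper name.

```shell
# node_port_of PORTS
# Given a PORT(S) value such as "443:31787/TCP", print the NodePort part.
# Prints nothing if the value has no "port:nodePort" pair (e.g. ClusterIP).
node_port_of() {
  local ports=$1
  case $ports in
    *:*)
      ports=${ports#*:}        # drop the service port and the colon
      echo "${ports%%/*}"      # drop the "/TCP" suffix
      ;;
  esac
}

node_port_of "443:31787/TCP"   # prints 31787

# The same value can be read directly from the API:
#   kubectl get svc kubernetes-dashboard -n kubernetes-dashboard \
#     -o jsonpath='{.spec.ports[0].nodePort}'
```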

7. Install metrics-server

# On k8s-master01

# Download the latest manifest

sudo wget https://mirror.ghproxy.com/https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml -O metrics.yaml

# Edit the manifest

sudo sed -i '/image:/i\        - --kubelet-insecure-tls' metrics.yaml

sudo grep "image:" metrics.yaml
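The `sed` insertion only produces valid YAML if the new list item is indented exactly like the `image:` line. An alternative sketch that copies the indentation from that line instead of typing it; `add_insecure_tls_arg` is a hypothetical name, and the approach assumes the `args:` list sits directly above `image:` in the manifest.

```shell
# add_insecure_tls_arg FILE
# Writes FILE to stdout with "- --kubelet-insecure-tls" inserted before each
# "image:" line, using that line's own leading whitespace.
add_insecure_tls_arg() {
  awk '
    /^[ \t]*image:/ {
      indent = $0
      sub(/image:.*$/, "", indent)           # keep only the leading whitespace
      print indent "- --kubelet-insecure-tls"
    }
    { print }
  ' "$1"
}

# Usage:
#   add_insecure_tls_arg metrics.yaml > metrics.patched.yaml
```

Writing to a new file keeps the downloaded manifest untouched, which makes it easy to diff the two before applying.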

# Switch the image to the Aliyun mirror, registry.aliyuncs.com/google_containers/

sudo sed -ri 's@(.*image:) .*metrics-server(/.*)@\1 registry.aliyuncs.com/google_containers\2@g' metrics.yaml

sudo grep "image:" metrics.yaml

# Install metrics-server

sudo kubectl apply -f metrics.yaml

Verify

root@k8s-master01:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready control-plane 86m v1.30.0

k8s-worker01 Ready <none> 85m v1.30.0

k8s-worker02 Ready <none> 85m v1.30.0

root@k8s-master01:~# kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

k8s-master01 73m 0% 1415Mi 14%

k8s-worker01 23m 0% 874Mi 8%

k8s-worker02 19m 0% 895Mi 9%

8. Test: deploy nginx

# On k8s-master01

## Create the nginx YAML file

cat <<EOF> nginx.yaml

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginxweb
spec:
  selector:
    matchLabels:
      app: nginxweb1
  replicas: 2
  template:
    metadata:
      labels:
        app: nginxweb1
    spec:
      containers:
      - name: nginxwebc
        image: nginx:latest
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginxweb-service
spec:
  externalTrafficPolicy: Cluster
  selector:
    app: nginxweb1
  ports:
  - protocol: TCP
    port: 80
    targetPort: 80
    nodePort: 30080
  type: NodePort

EOF

## Apply it

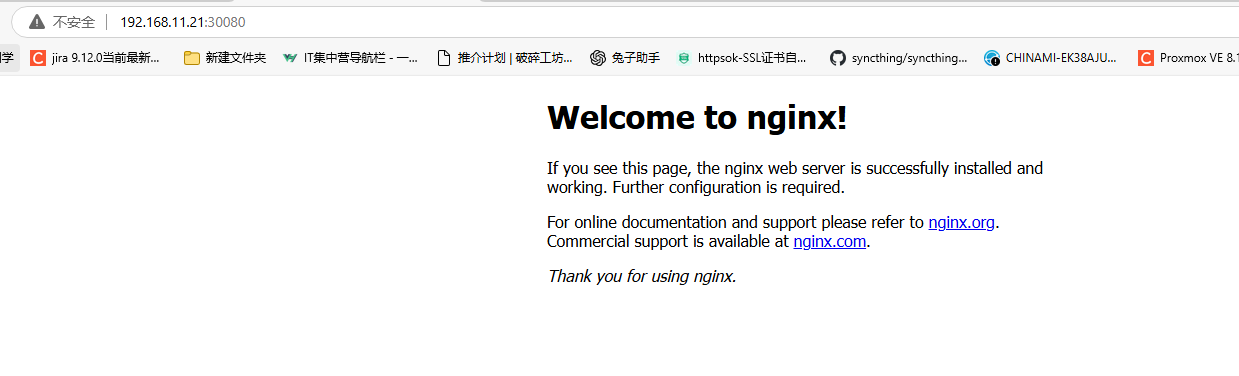

sudo kubectl apply -f nginx.yaml

## Check that the resources were created

root@k8s-master01:~# kubectl get pod,svc -n default

NAME READY STATUS RESTARTS AGE

pod/nginxweb-55dcdbb446-r547w 1/1 Running 0 2m5s

pod/nginxweb-55dcdbb446-r87j2 1/1 Running 0 2m5s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 53m

service/nginxweb-service NodePort 10.101.95.21 <none> 80:30080/TCP 2m5s

Test in a browser using any node's IP address, for example http://192.168.11.26:30080
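The same check can be made from a shell. A minimal sketch, assuming `curl` is installed; `check_nodeport` is a hypothetical helper.

```shell
# check_nodeport HOST PORT
# Succeeds if an HTTP GET to http://HOST:PORT/ answers with a 2xx or 3xx
# status within 5 seconds; fails on any other status or connection error.
check_nodeport() {
  local code
  code=$(curl -s -o /dev/null --max-time 5 -w '%{http_code}' "http://$1:$2/") || return 1
  case $code in
    2??|3??) return 0 ;;
    *)       return 1 ;;
  esac
}

# Usage: with externalTrafficPolicy: Cluster, every node should answer.
#   for ip in 192.168.11.26 192.168.11.27 192.168.11.28; do
#     check_nodeport "$ip" 30080 && echo "$ip ok" || echo "$ip failed"
#   done
```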

9. Other configuration: command completion

Install command completion

# On k8s-master01

sudo apt install bash-completion -y

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc
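Running that last command more than once appends the same line to ~/.bashrc each time. A sketch of an idempotent variant; `append_once` is a hypothetical helper.

```shell
# append_once LINE FILE
# Appends LINE to FILE unless an identical line is already present.
append_once() {
  local line=$1 file=$2
  touch "$file"                                   # make sure the file exists
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Usage:
#   append_once 'source <(kubectl completion bash)' ~/.bashrc
```

`grep -x` matches whole lines and `-F` treats the text literally, so the parentheses in the completion line are not interpreted as a pattern.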
